You might wonder whether a computer could diagnose you better than a doctor. Studies comparing medical AI with human clinicians reveal a split result. AI often matches, and sometimes outperforms, humans in visual tasks like radiology. However, it matches or falls slightly behind them in complex diagnostic reasoning. Currently, the highest accuracy comes from doctors using AI as a second opinion. This article explores which medical tasks AI has mastered, where it still struggles, and why the human touch remains irreplaceable.

TL;DR (Too Long; Didn't Read)

- Best at: Reading images (X-rays, skin checks) and passing standardized exams.

- Average at: General triage and handling complex, messy patient histories.

- The Winner: Doctors working with AI outperform both doctors alone and AI alone.

- The Catch: AI can be biased and lacks the critical human intuition needed for safety.

What “Accuracy” Means in Medical AI

Before declaring a winner, we need to understand the scorecard. Researchers comparing medical AI with human doctors do not just look at a simple pass-or-fail grade.

They measure performance using specific metrics. Understanding these will help you see the full picture. First, we review the most basic score everyone checks.

1. Diagnostic Accuracy

This is the most common metric. It is the proportion of all cases checked that were diagnosed correctly, counting both "true positives" (correctly identifying a disease) and "true negatives" (correctly identifying a healthy patient). But a high overall score is not enough on its own. We also need to know whether the system misses anyone.
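To see why overall accuracy alone can mislead, here is a minimal Python sketch with made-up numbers. The `accuracy` function and the 1,000-patient screening set are hypothetical illustrations, not data from any study mentioned in this article. When only 10 patients are sick, a "model" that calls everyone healthy still scores 99% accuracy while missing every single case.

```python
def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Proportion of all cases diagnosed correctly: (TP + TN) / total."""
    total = tp + tn + fp + fn
    return (tp + tn) / total


# Hypothetical screening set: 1,000 patients, only 10 of whom are sick.
# A "model" that labels everyone healthy gets all 990 healthy patients
# right (true negatives) and misses all 10 sick ones (false negatives).
print(accuracy(tp=0, tn=990, fp=0, fn=10))  # 0.99
```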

2. Sensitivity (Catching the Disease)

This acts as the system's alarm bell. Sensitivity is the share of patients who actually have the disease that the AI or doctor correctly flags. High sensitivity means fewer missed cases. On the flip side, we also need to make sure we aren't scaring healthy people.

3. Specificity (Avoiding False Alarms)

This is just as important. Specificity is the share of people without the disease whom the system correctly clears, which shows how well it avoids false alarms. Low specificity can lead to unnecessary stress and expensive treatments for healthy people.
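Sensitivity and specificity both come from the same four counts (true and false positives and negatives). The Python sketch below uses two hypothetical readers with invented counts on the same 1,000 patients to show how one rate can be traded for the other; the function and reader names are illustrative only, not taken from any study or library.

```python
def sensitivity(tp: int, fn: int) -> float:
    """Share of truly sick patients who are flagged: TP / (TP + FN)."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """Share of truly healthy patients who are cleared: TN / (TN + FP)."""
    return tn / (tn + fp)


# Hypothetical readers on the same invented set of 10 sick and 990 healthy patients.
readers = {
    "A (calls everyone healthy)": {"tp": 0, "fn": 10, "tn": 990, "fp": 0},
    "B (alarm-prone)": {"tp": 9, "fn": 1, "tn": 940, "fp": 50},
}

for name, c in readers.items():
    sens = sensitivity(c["tp"], c["fn"])
    spec = specificity(c["tn"], c["fp"])
    print(f"{name}: sensitivity={sens:.2f}, specificity={spec:.3f}")
# A (calls everyone healthy): sensitivity=0.00, specificity=1.000
# B (alarm-prone): sensitivity=0.90, specificity=0.949
```

Reader A never raises a false alarm but catches no one, which is why the two rates are reported together rather than one at a time.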

4. AUC (Area Under the Curve)

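Sensitivity and specificity usually pull against each other: lowering the bar for calling a case "positive" catches more disease but also raises more false alarms. AUC summarizes this trade-off. It is the area under the ROC (receiver operating characteristic) curve, which plots sensitivity against the false-alarm rate (1 minus specificity) at every possible decision threshold. It measures the overall ability to discriminate between disease and non-disease: 0.5 is no better than a coin flip, 1.0 is perfect separation, and a higher AUC means better diagnostic performance.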
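One intuitive way to read AUC: it equals the probability that a randomly chosen sick patient receives a higher risk score than a randomly chosen healthy one, with ties counting as half. The minimal Python sketch below computes it straight from that pairwise definition using hypothetical scores. It is an illustration, not how any particular study computed its figures; real analyses typically rely on a statistics library (for example, scikit-learn's `roc_auc_score`).

```python
from itertools import product


def auc(scores_sick: list[float], scores_healthy: list[float]) -> float:
    """Probability that a random sick patient outscores a random healthy one.

    Every (sick, healthy) pair is compared; a correct ranking counts 1,
    a tie counts 0.5. This pairwise form equals the area under the ROC curve.
    """
    wins = 0.0
    for s, h in product(scores_sick, scores_healthy):
        if s > h:
            wins += 1.0
        elif s == h:
            wins += 0.5
    return wins / (len(scores_sick) * len(scores_healthy))


# Hypothetical risk scores (0 to 1) for 4 sick and 4 healthy patients.
sick = [0.9, 0.8, 0.7, 0.4]
healthy = [0.6, 0.3, 0.2, 0.1]
print(auc(sick, healthy))  # 0.9375
```

Here 15 of the 16 sick/healthy pairs are ranked correctly; the only miss is the sick patient scored 0.4, who falls below the healthy patient scored 0.6.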

Note: Most studies compare AI against a group of expert clinicians looking at the exact same cases under controlled conditions to ensure fairness.

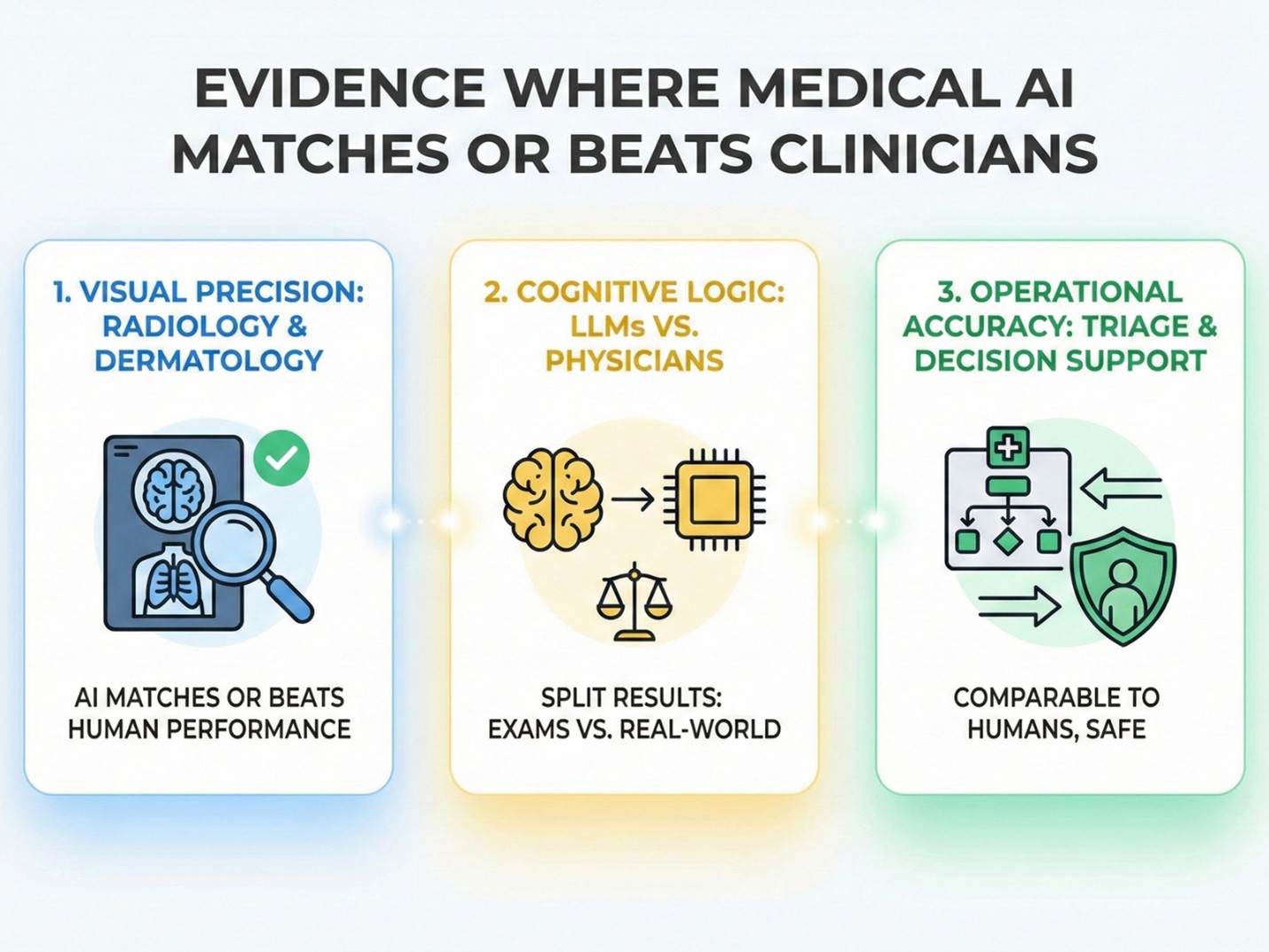

Evidence Where Medical AI Matches or Beats Clinicians

There are specific areas where machines are incredibly powerful. In tasks that require analyzing patterns or staring at pixels, AI in healthcare is proving to be a formidable competitor.

Here are the areas where AI is winning or tying with humans. The first and strongest evidence comes from fields that rely heavily on visual data.

1. Visual Precision: Radiology and Dermatology

Deep learning models have proven exceptionally skilled at spotting patterns hidden within medical images, often learning from thousands of X-rays faster than any human student could.

- Radiology and X-rays: Systematic reviews of diagnostic deep learning suggest that AI frequently performs on par with expert radiologists, and sometimes better. Sample sizes vary between studies, but the capability is clear.

- Visual Recognition: In specific, narrow tasks, systems built on convolutional neural networks (CNNs) have reached 90–100% accuracy. For certain image recognition problems, they have surpassed the average performance of human clinicians.

Maximizing Visual Precision with AI Assistance

AI alone is powerful, but visual precision is highest when it is paired with human expertise. The data show that AI-assisted diagnosis significantly outperforms both clinicians working alone and algorithms working in isolation. The table below details how this hybrid approach enhances detection rates in visual fields like cancer radiology.

Essentially, AI-augmented readers found more cancers while also raising fewer false alarms. Together with the head-to-head results above, this suggests that for focused imaging tasks with clean data, AI can match top specialists. However, seeing is only one part of the job. We also need to know how well AI competes on medical knowledge and reasoning.

2. Cognitive Logic: LLMs vs. Physicians

Moving beyond images, the next question is whether tools like ChatGPT can actually diagnose a patient from their symptoms. Researchers are now pitting generative AI and large language models (LLMs) directly against doctors. The results depend heavily on the context.

- An Even Split: A recent systematic review of 30 studies found no clear winner. Professionals came out ahead in about 33.7% of the comparisons and LLMs in 33.3%. The remaining comparisons, roughly a third, were a draw.

- Medical Exams: Put AI in a test-taking environment, and it thrives. One study showed ChatGPT answering about 90% of medical exam questions correctly. It outperformed entire classes of medical students and even scored perfect marks in complex fields like neurology.

- Real-World Struggles: Taking a test is not the same as treating a person. Pooled across a wide range of studies, the diagnostic accuracy of generative AI is about 52.1%.

This distinction matters. AI can ace a multiple-choice exam, yet the nuance of real-world clinical cases remains a hurdle. Beyond complex diagnosis, there is also the question of how accurately AI handles triage: the initial sorting of patients in busy environments.

3. Operational Accuracy: Triage and Decision Support

In the chaotic environment of a hospital, AI is often used to triage patients or suggest diagnoses. Does this actually help doctors make better calls? The answer isn't a simple yes.

- No Major Jump: One open-label study found that adding AI support didn't significantly change the overall accuracy for physicians (57.4% with AI vs. 56.3% without).

- Safety First: On the other hand, a study comparing an AI triage system against human doctors found that the AI achieved levels of accuracy and safety comparable to theirs.

This suggests that AI isn't a magic wand that instantly fixes diagnostic errors. It can, however, serve as a safe and reliable front-end tool for symptom checking, provided the system is rigorously validated.

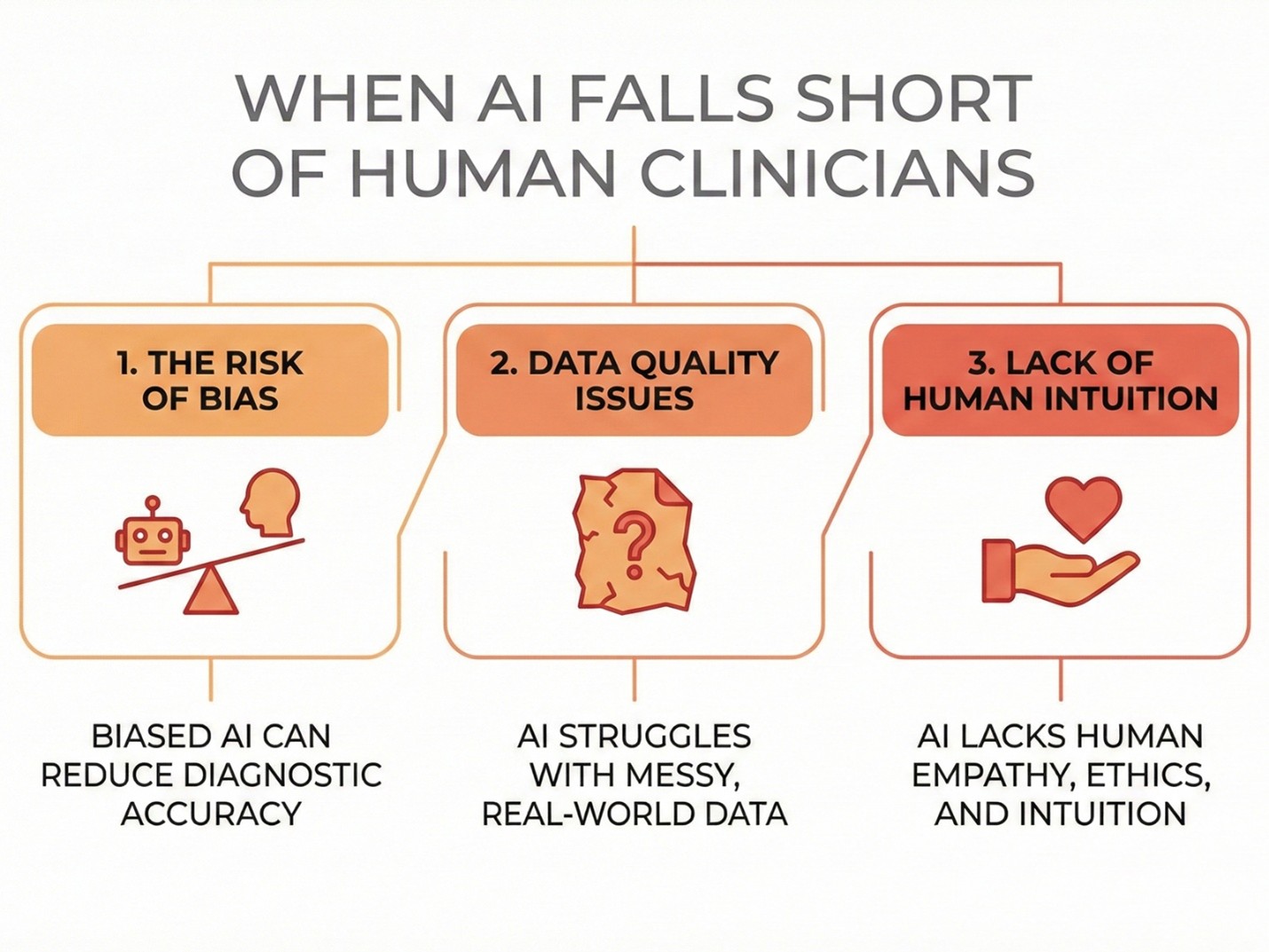

When AI Falls Short of Human Clinicians

It is not all good news. Despite the hype, there are significant limitations to medical AI accuracy. Technology is powerful, but it can be fragile. The first major crack in the armor appears when the system learns the wrong lessons.

1. The Risk of Bias

AI can sometimes mislead doctors. A study on diagnosing hospitalized patients showed a troubling result: biased AI recommendations reduced clinicians’ diagnostic accuracy by about 9 percentage points.
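"Percentage points" describe an absolute difference between two percentages, which is not the same as a percent change. The short Python sketch below makes the distinction concrete. The study's baseline accuracy is not given here, so the 70% starting value is purely a hypothetical assumption chosen for illustration, and the function names are illustrative.

```python
def change_in_points(before_pct: float, after_pct: float) -> float:
    """Absolute difference between two percentages, in percentage points."""
    return after_pct - before_pct


def relative_change_pct(before_pct: float, after_pct: float) -> float:
    """Change expressed as a percent of the starting value."""
    return (after_pct - before_pct) / before_pct * 100


# Hypothetical: the 70% baseline is an assumption for illustration only.
before, after = 70.0, 61.0
print(f"{change_in_points(before, after):+.1f} percentage points")  # -9.0 percentage points
print(f"{relative_change_pct(before, after):+.1f}% relative change")  # -12.9% relative change
```

The same 9-point drop would be a larger relative change from a lower baseline, which is why the two figures should not be confused.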

If a doctor trusts a flawed AI too much, the patient outcome can get worse. Beyond bias, these systems also struggle when the information they receive is imperfect.

2. Data Quality Issues

AI loves clean, organized data. Real life is messy.

- Systematic reviews emphasize that performance is highly variable.

- Accuracy often drops when moving from "clean" benchmark data to messy clinical data.

- Many studies have a "high risk of bias" because they are tested in labs, not in real hospitals.

This leads us to the skills where a computer cannot yet compete with the human touch.

3. Lack of Human Intuition

There are things a computer simply cannot calculate yet. Human clinicians possess skills that are critical for patient care:

- Handling Messy Info: Doctors can integrate incomplete or conflicting notes, family history, and social context.

- Intuition: Experienced doctors have a "clinical intuition" for atypical cases.

- Empathy and Ethics: Managing uncertainty, communicating difficult news, and shared decision-making are human traits. AI cannot hold a patient's hand or understand their fear.

Human–AI Collaboration: Where Accuracy Is Highest

So, who wins the battle? The answer is simple: neither. The winner is the team.

Across almost every study, the most promising pattern is human–AI collaboration. Combining the speed of AI with the judgment of a human produces better results than either achieves alone.

- Skin Cancer Diagnosis: Clinicians assisted by AI achieved higher sensitivity and specificity than clinicians alone. The biggest improvement was seen in non-dermatologists (general doctors), effectively narrowing the expertise gap.

- Radiology Success: In cancer radiology, pooled analyses show that AI assistance improves detection rates. It helps doctors spot subtle findings they might have missed with the naked eye.

- Liver Cancer Detection: For hepatocellular carcinoma, AI models were generally more sensitive, while experts maintained high specificity. Combining these strengths yields the best diagnostic performance.

The strongest evidence today supports AI not as a replacement, but as a powerful diagnostic aid.

Bottom Line: How Accurate Is Medical AI vs. Clinicians?

Let’s summarize the reality of AI in healthcare today.

1. Specialized Tasks: In narrow, data-rich tasks like reading X-rays or answering exam questions, medical AI can reach or even exceed expert-level accuracy.

2. General Diagnosis: Across broader, more realistic tasks, AI performs about the same as clinicians on average. It is hit or miss.

3. The Gold Standard: In actual clinical practice, the best results come from AI + Clinician collaboration. This improves accuracy while keeping the human safety net.

We are moving toward a future where your doctor will likely use AI as a "second opinion" right in the exam room. This partnership could mean fewer errors and better health outcomes for everyone.

Do you have more questions about how technology is changing healthcare? Feel free to contact us or browse our other articles for the latest updates on medical innovation.

Frequently Asked Questions (FAQ)

1. Can AI replace doctors completely?

No. AI lacks the empathy, human intuition, and complex judgment required for safe, real-world patient care.

2. Is medical AI safe to use for diagnosis?

Generally, yes, but only when used as a support tool under strict human supervision to catch potential software biases.

3. What is the most accurate use of AI in medicine right now?

Image-based diagnosis, specifically in radiology and dermatology (like skin cancer detection), is currently the most accurate application.

4. Does AI help doctors make fewer mistakes?

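Often, yes. In imaging tasks such as cancer radiology and skin cancer detection, combining AI with human oversight has reduced missed diagnoses and false alarms compared with doctors working alone. In general diagnosis the gains are smaller, and biased AI can even make doctors less accurate.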
