The fundamental difference between Research AI and Clinical AI comes down to their primary application, regulatory requirements, and direct impact on patient safety. Research AI operates in controlled laboratory environments to discover new medical knowledge, process massive genomic datasets, and accelerate pharmaceutical development without directly interacting with real patients. In stark contrast, Clinical AI is a highly regulated, thoroughly validated technology deployed in hospitals and clinics to assist healthcare professionals with real-time diagnostics, patient flow management, and personalized care decisions.

Understanding this distinction is not just an academic exercise for medical professionals. For healthcare facilities across the globe, confusing experimental algorithms with clinical-grade infrastructure can lead to critical system failures and compromised patient safety. Healthcare organizations face persistent challenges that slow innovation, ranging from fragmented systems to poor usability in critical moments.

By distinguishing between these two forms of artificial intelligence, healthcare leaders can build smarter, more efficient care systems. This guide explores the seven technical, ethical, and operational factors that differentiate these vital technologies.

1. Operating Environments and Target End-Users

The most immediate difference between these two forms of artificial intelligence is where they live and who uses them on a daily basis. They serve entirely different professional demographics and operate under completely different environmental pressures.

Research AI represents the cutting edge of scientific discovery and data exploration. These intelligent systems are built to process enormous volumes of unstructured biological data, academic literature, and clinical trial results. They are deployed in long-cycle research environments, biotech firms, and pharmaceutical companies. The primary users are data scientists, researchers, and pharmacologists who operate in controlled, low-stress environments where time is measured in months or years.

Clinical AI, on the other hand, is the application of intelligent systems in a live, operational healthcare environment. It is designed to empower clinicians, organizations, and innovators with tools built to enhance patient care and operational excellence. The end-users are physicians, triage nurses, and hospital administrators operating in high-pressure, time-sensitive environments like emergency rooms, where split-second decisions save lives.

Adapting to the End-User Workflow

In a clinical setting, healthcare professionals cannot afford to spend hours interpreting data. The user experience (UX) must be flawless. Clinical systems require exceptional, clinical-grade UX and complete transparency so that doctors can glance at a screen and immediately understand the patient's status. Research systems, however, often rely on complex dashboards that require a deep background in data science to navigate.

2. Tolerance for Algorithmic Error and Patient Safety

When we discuss the difference between Research AI and Clinical AI, the concept of error tolerance is arguably the most critical factor. How a system handles a mistake defines its classification.

Because research algorithms are isolated from real-time patient care, they operate with a significantly higher tolerance for error. Mistakes or "hallucinations" in Research AI are often part of the scientific learning process. The primary goal of these models is to generate new hypotheses. If an AI suggests a chemical compound that ultimately fails in a simulation, no one is harmed. It is simply one more data point guiding scientists toward the right answer.

The Zero-Harm Policy in Clinical Environments

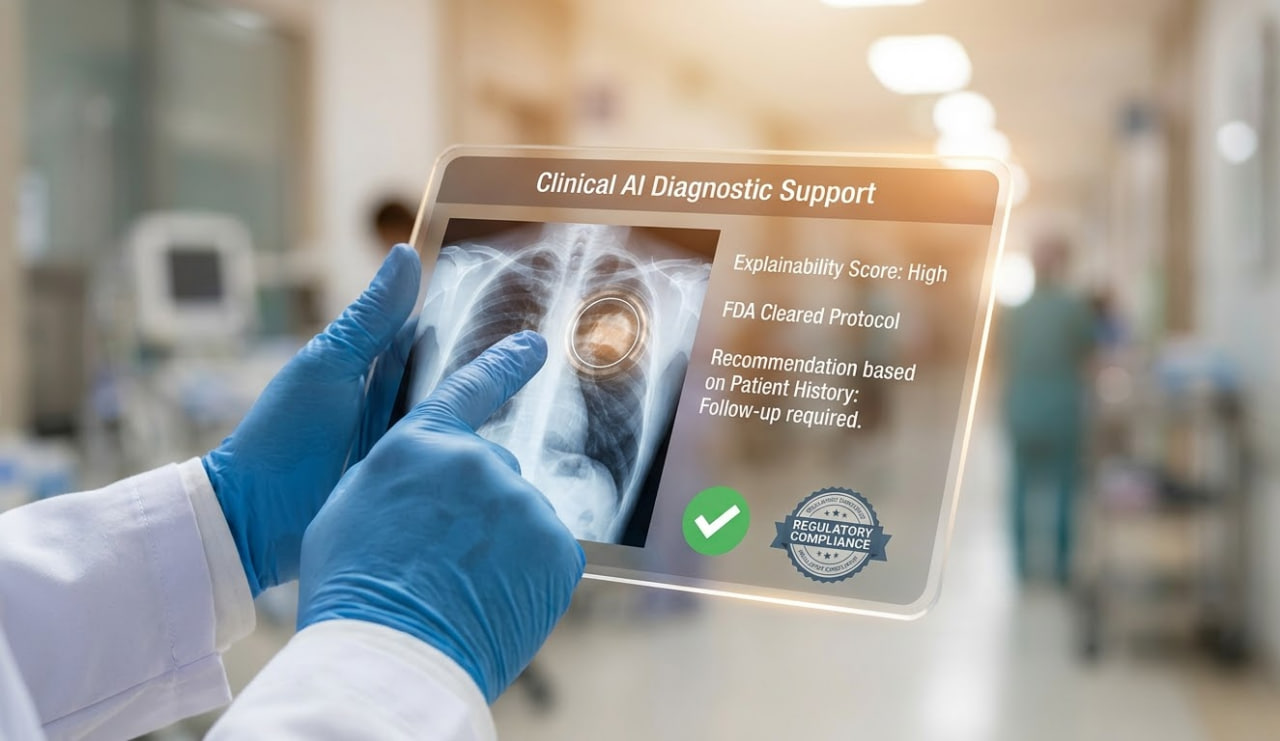

In contrast, Clinical AI operates under a near-zero error tolerance policy. When an algorithm is used to suggest a triage priority, highlight an anomaly on an X-ray, or recommend a medication dosage, failure is simply not an option. A false positive or false negative in a live hospital setting can directly impact patient safety, leading to improper treatments or delayed care.

Therefore, clinical systems must maintain high standards of precision and accountability. They undergo rigorous testing against large datasets of real-world patient profiles to confirm that they do not show bias or unacceptable failure rates when exposed to diverse human populations.
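One way to make this kind of population-level testing concrete is to measure false positives and false negatives separately for each patient group. The following is a minimal sketch in Python, not a validated evaluation protocol; the function name `subgroup_metrics`, the group labels, and the toy records are all hypothetical and invented for illustration.

```python
from collections import defaultdict


def subgroup_metrics(records):
    """Compute sensitivity and specificity per subgroup.

    Each record is a (group, actual, predicted) tuple where `actual`
    and `predicted` are booleans (True = condition present / flagged).
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, actual, predicted in records:
        if actual and predicted:
            key = "tp"
        elif actual and not predicted:
            key = "fn"  # false negative: a missed case
        elif not actual and predicted:
            key = "fp"  # false positive: a false alarm
        else:
            key = "tn"
        counts[group][key] += 1

    metrics = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        metrics[group] = {
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return metrics


# Toy data, invented purely for illustration.
records = [
    ("group_a", True, True), ("group_a", True, True),
    ("group_a", False, False), ("group_a", False, True),
    ("group_b", True, True), ("group_b", True, False),
    ("group_b", False, False), ("group_b", False, False),
]
metrics = subgroup_metrics(records)
```

In this toy data the model catches every true case in `group_a` but only half of them in `group_b`, which is the kind of gap a clinical validation process is meant to surface before deployment.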

3. Regulatory Oversight and Strict Medical Approvals

You cannot simply download an algorithm from the internet and begin diagnosing patients. The regulatory landscape surrounding these two technologies is vastly different, reflecting the level of risk associated with each.

Research AI generally faces moderate regulatory oversight. The focus in the laboratory is primarily on data integrity, ethical research practices, and ensuring that any human data used for training is thoroughly anonymized. Because these tools do not diagnose or treat, they do not require the highest tier of medical device approvals.

Navigating FDA and Health Canada Compliance

Clinical AI that informs diagnosis or treatment is typically regulated as a medical device and is heavily scrutinized. To legally operate in a hospital or clinic, these systems must undergo rigorous clinical validation, a process that can take years. They also require secure-by-design architectures and privacy-focused data governance to protect sensitive patient records.

Systems must be designed from the ground up to align with regulatory frameworks. For example, diagnostic software marketed in the United States generally requires clearance or approval from the FDA, while in Canada it must meet the licensing requirements of Health Canada’s Medical Devices Directorate. These organizations demand strong evidence that the AI is safe and effective and does not introduce new risks.

4. The Critical Need for Explainability and Clinical UX

One of the most profound technological differences lies in the concept of "Explainable AI" (XAI). In the research sector, algorithms often function as a "black box." A deep learning model might process millions of genetic markers and output a prediction without clearly explaining how it arrived at that conclusion. In a lab, if the output is scientifically useful, the lack of transparency might be acceptable.

In a hospital, a black box is a massive liability. Clinical systems require complete transparency. Physicians cannot and will not rely on mysterious algorithms when making life-or-death decisions.

Ethical Intelligence and Accountability

Ethical intelligence is a core principle in clinical environments. Clinical AI must provide clear, explainable reasoning for every recommendation it makes. Combined with bias awareness and responsible deployment, this transparency ensures that healthcare professionals remain the ultimate decision-makers.

If a clinical system flags a patient for an urgent intervention or suggests a specific Personalized Patient Care plan, the clinician must instantly understand the data points that triggered the alert. The system must highlight the exact region of the MRI or the specific blood test result that caused the alarm. This human-centered design is what makes clinical-grade systems actually usable, safe, and trustworthy in real-world scenarios.
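To illustrate what "showing the data points that triggered the alert" can look like, here is a minimal sketch using a simple linear risk score, where each measurement's contribution can be read off directly. It is an assumption-laden example, not a description of any real product: the function `explain_alert`, the weights, the baseline values, and the threshold are hypothetical and not clinically derived.

```python
def explain_alert(weights, baseline, patient, threshold):
    """Score a patient with a linear model and rank feature contributions.

    contribution = weight * (patient value - baseline value), so each
    number says how far that measurement pushed the score from baseline.
    """
    contributions = {
        name: weights[name] * (patient[name] - baseline[name])
        for name in weights
    }
    score = sum(contributions.values())
    # Largest absolute contribution first, so the main driver leads the list.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return {"alert": score >= threshold, "score": score, "drivers": ranked}


# Hypothetical weights and reference values, not clinically derived.
weights = {"heart_rate": 0.02, "lactate": 0.5, "systolic_bp": -0.01}
baseline = {"heart_rate": 75, "lactate": 1.0, "systolic_bp": 120}
patient = {"heart_rate": 125, "lactate": 4.0, "systolic_bp": 90}

result = explain_alert(weights, baseline, patient, threshold=2.0)
```

Here the alert fires and `result["drivers"]` lists lactate first, followed by heart rate and blood pressure, so a clinician can see which measurement mattered most. Deep learning models need dedicated attribution techniques to produce a comparable breakdown, which is part of why explainability is harder to retrofit than to design in.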

Ready to overcome fragmented medical systems and integrate secure, compliant technology?

InnoMed designs interconnected platforms and infrastructure, not isolated features. Contact our team today to explore our advanced healthcare technology stack and discover how we can elevate your clinical workflows.

5. System Integration vs. Isolated Standalone Tools

A major reason why AI implementations fail in healthcare is short-term thinking. Healthcare organizations often purchase disposable products or standalone tools to solve single problems. This leads to a fragmented technology stack that frustrates doctors and slows down patient care.

Research AI often functions perfectly well as a standalone tool. A biotech firm might use an isolated software program specifically to map proteins. It does not need to talk to the billing department or the pharmacy.

Building Interconnected Healthcare Platforms

Clinical AI cannot survive in isolation. True clinical intelligence requires a partner for long-term infrastructure. We must design solutions for environments where failure is not an option, prioritizing durability and scalability.

Unlike its research counterpart, Clinical AI must be integrated directly into the healthcare technology stack. It must communicate seamlessly with live Electronic Health Records (EHR), hospital billing software, and laboratory information systems. Healthcare works best when systems work together, integrating AI, software, data, and hardware into one unified ecosystem. By taking a systems-level approach, hospitals achieve higher clinical adoption and stronger security.

6. The Challenge of Translational AI from Lab to Clinic

Understanding the difference between Research AI and Clinical AI requires understanding the bridge between them, known as Translational AI. One of the biggest bottlenecks in modern medicine is moving successful algorithms out of the research lab and securely into the hospital.

Many algorithms perform flawlessly on clean, perfectly formatted research datasets. Data scientists spend months curating these datasets, ensuring every X-ray is cleanly exposed and every data point is correctly labeled.

However, these same algorithms can fail catastrophically when exposed to the messy, incomplete, and unpredictable data found in a real-world clinic. A patient might move during a scan, a doctor might use shorthand in a medical note, or a piece of equipment might be outdated.

Overcoming Interoperability and Security Issues

To successfully transition to the clinical side, technology must address ongoing security and interoperability issues. Data integrity, privacy, and compliance are non-negotiable.

Whether supporting Advanced Diagnostics or managing population-scale health initiatives for government sectors, clinical systems must be robust enough to handle real-world noise without compromising patient safety or violating strict public-sector data requirements. They must filter out bad data, alert the physician to missing information, and maintain total operational security.
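The idea of filtering out bad data and alerting the physician to missing information can be sketched as a validation step that runs before any model sees a record. This is a minimal illustration only: the field names, the function `validate_record`, and the plausibility ranges are assumptions made for the example, not clinical guidance or a real system's schema.

```python
REQUIRED_FIELDS = ("patient_id", "heart_rate", "temperature_c")

# Plausibility ranges are illustrative assumptions, not clinical guidance.
PLAUSIBLE_RANGES = {
    "heart_rate": (20, 300),
    "temperature_c": (25.0, 45.0),
}


def validate_record(record):
    """Return (is_usable, warnings) for one incoming record (a dict)."""
    warnings = []
    # Surface missing information instead of silently guessing a value.
    for field in REQUIRED_FIELDS:
        if record.get(field) is None:
            warnings.append(f"missing: {field}")
    # Flag values that are present but outside a plausible range.
    for field, (low, high) in PLAUSIBLE_RANGES.items():
        value = record.get(field)
        if value is not None and not (low <= value <= high):
            warnings.append(f"implausible: {field}={value}")
    return (not warnings, warnings)


ok, notes = validate_record(
    {"patient_id": "p-001", "heart_rate": 900, "temperature_c": None}
)
```

In this example `ok` is `False` and `notes` names both the missing temperature and the implausible heart rate, so the problem is shown to a human rather than passed quietly into a prediction.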

7. Real-Time Action vs. Long-Term Discovery

The final key factor is the operational time horizon. Research AI is built for long-term discovery. Analyzing global public health data to predict population-scale health trends, or simulating chemical interactions for drug discovery, can take weeks of continuous computing power. The answers are not needed immediately.

Clinical AI is entirely built around real-time action. When a trauma patient arrives at a hospital, the medical team needs answers in seconds. The intelligence must be applied instantly to distribute demand efficiently and save lives.

Real-World Application: Optimizing Emergency Care

A perfect example of applied clinical intelligence is the management of emergency room congestion. Emergency access under pressure is one of the most critical challenges in healthcare. Traditional systems leave patients confused and hospital staff overwhelmed.

This is where Waitless ER changes the paradigm. Developed by InnoMed, this public-facing platform applies real-time data, system orchestration, and user-centered design to solve ER bottlenecks.

By utilizing clinical-grade artificial intelligence, Waitless ER provides the following immediate benefits:

- Real-Time Wait Time Intelligence: Provides live visibility into emergency and urgent care availability across multiple facilities.

- Zero-Friction Access: Allows immediate patient access without complex onboarding or login barriers during high-stress moments.

- Care Guidance & Triage Support: Offers clear, human-centered guidance, directing patients to the most appropriate care facility based on their symptoms and current hospital capacities.

- Demand-Aware Optimization: Adapts system behavior dynamically during peak periods, such as flu season or holidays, preventing any single facility from becoming critically overwhelmed.

By guiding patients to appropriate care paths without panic or friction, this clinical AI solution improves overall system flow and ensures that medical professionals can focus on treating patients rather than managing crowds.
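As a rough illustration of demand-aware guidance in general, the sketch below recommends the facility with the shortest estimated wait among those able to handle the required level of care. It is not the Waitless ER algorithm, which this article does not describe; the function `recommend_facility`, the facility names, the `care_level` scale, and the simple queue-length estimate are all hypothetical.

```python
def recommend_facility(facilities, required_level):
    """Pick the eligible facility with the shortest estimated wait.

    `facilities` is a list of dicts with hypothetical keys: name,
    care_level (higher = more acute care), waiting, patients_per_hour.
    Returns (name, estimated wait in minutes) or None if none qualify.
    """
    eligible = [
        f for f in facilities
        if f["care_level"] >= required_level and f["patients_per_hour"] > 0
    ]
    if not eligible:
        return None

    def estimated_wait_minutes(f):
        # Naive estimate: people already waiting divided by throughput.
        return 60 * f["waiting"] / f["patients_per_hour"]

    best = min(eligible, key=estimated_wait_minutes)
    return best["name"], round(estimated_wait_minutes(best))


# Invented facilities and numbers, for illustration only.
facilities = [
    {"name": "Downtown ER", "care_level": 3, "waiting": 40, "patients_per_hour": 10},
    {"name": "Eastside ER", "care_level": 3, "waiting": 12, "patients_per_hour": 8},
    {"name": "Urgent Care North", "care_level": 1, "waiting": 3, "patients_per_hour": 6},
]
choice = recommend_facility(facilities, required_level=2)
```

With these numbers the urgent care clinic is excluded because it cannot handle the required level of care, and the less crowded of the two emergency rooms is recommended. A production system would also need to account for travel time, data freshness, and clinical triage rules.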

The Future of Intelligent Healthcare Infrastructure

The healthcare industry is moving rapidly toward a future of personalized medicine and real-time operational intelligence. However, success depends entirely on choosing the right type of technology for the right environment. Organizations must ensure that their AI-driven systems prioritize transparency and human accountability.

Research AI will continue to push the boundaries of what is medically possible, discovering new cures, uncovering genetic mysteries, and accelerating the pace of pharmaceutical innovation. Meanwhile, Clinical AI will become the invisible, reliable backbone of modern hospitals, ensuring those new treatments are delivered safely, equitably, and efficiently.

To understand the broader implications and ethical requirements of implementing these systems, healthcare leaders should continually refer to the World Health Organization's guidance on AI Ethics, ensuring innovations actually serve the clinical needs of patients and providers alike.

By trusting in systems built for long-term reliability rather than short-term fixes, healthcare professionals can stop fighting with fragmented software and return to what they do best: saving lives.

Let’s Build the Future of Intelligent Systems Together. Trusted by over 5,000 healthcare professionals across Toronto, InnoMed delivers 99.98% uptime and 40% faster workflows.

Contact InnoMed today to schedule a consultation and transform your clinical infrastructure.

Frequently Asked Questions (FAQ)

1. What is the main difference between Research AI and Clinical AI?

The main difference is their application, environment, and regulatory oversight. Research AI is used in laboratories by data scientists to discover new medical knowledge (for example, in drug discovery) and faces comparatively moderate regulatory oversight. Clinical AI is highly regulated, scientifically validated software used directly in hospitals by doctors and nurses to diagnose patients and manage real-time medical care.

2. Can an algorithm used successfully in research be immediately used in a hospital?

No. Moving an algorithm from a laboratory to a clinical setting is a complex process known as Translational AI. It requires extensive validation, clinical trials, and user-interface optimization. The AI must prove its safety, explainability, and reliability on messy, real-world patient data before receiving mandatory regulatory approval from bodies like the FDA or Health Canada.

3. How does Clinical AI improve emergency room efficiency?

Clinical AI platforms, such as Waitless ER, analyze live hospital data to predict patient influx, optimize triage processes, and provide real-time wait visibility to the public. This intelligence reduces facility congestion, distributes patient loads evenly across healthcare networks, and guides patients to the right care paths without friction or confusion.

4. Why is "explainability" so important in Clinical AI systems?

In a clinical setting, doctors make life-saving decisions based on AI recommendations. Explainability (or XAI) ensures the AI provides clear, transparent reasoning for its outputs. This allows the physician to verify the data, trust the system, and maintain legal and ethical accountability. A "black box" system that gives answers without showing its work is too dangerous for patient care.

5. How does InnoMed ensure the security of clinical data systems?

Security, privacy, and regulatory compliance are integral to our operations from day one. We utilize secure-by-design architectures, privacy-focused data governance, and ethical AI frameworks. Our interconnected platforms are built to meet the highest global healthcare standards and regulatory compliance requirements, ensuring patient data is never compromised.