When patients hear that AI is being used in radiology, the first reaction is often simple: is a machine reading my X-ray instead of a doctor?

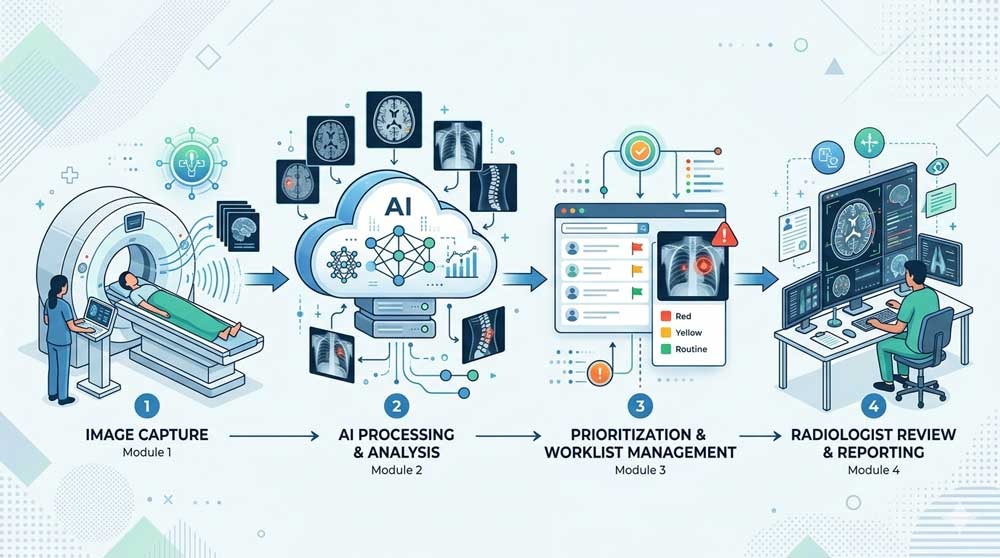

At Innomed, we hear this exact question again and again from patients across Canada. The answer is more practical than dramatic. In many Canadian hospitals and imaging centers, AI already helps analyze X-rays, CT scans, mammograms, MRIs, and ultrasound studies. It flags urgent findings, helps sort worklists, improves image quality, and even drafts parts of radiology reports. But in real clinical workflows, AI does not replace the radiologist. It supports them.

Quick Answer:

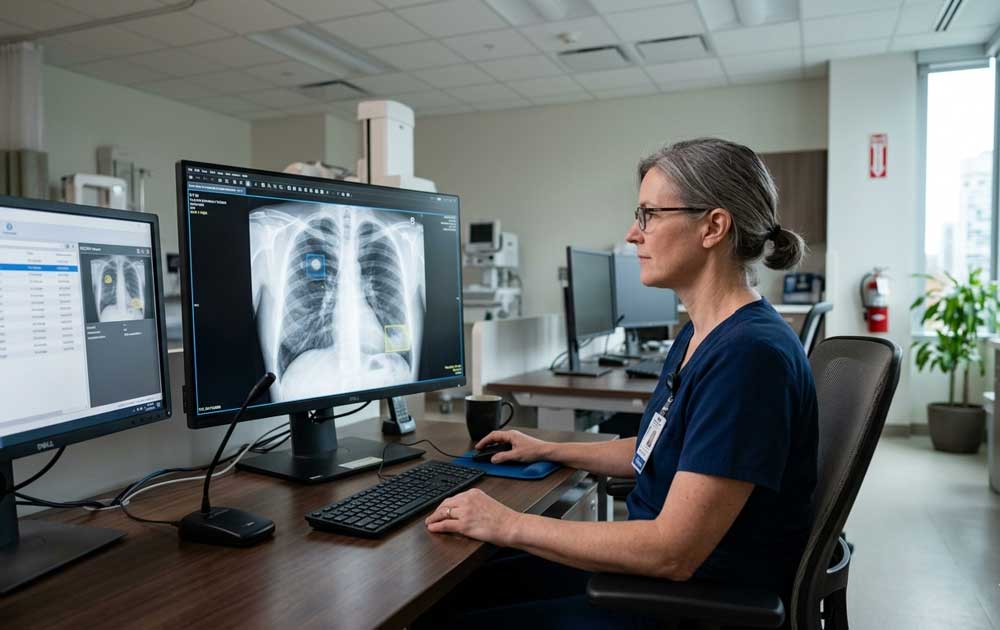

Yes, AI can read patterns in an X-ray. It already does this in many clinical settings. But it works as an assistive tool, not the final decision-maker. A radiologist or another qualified physician still reviews the image, interprets the findings in clinical context, and signs the report.

How Does AI Read Medical Images?

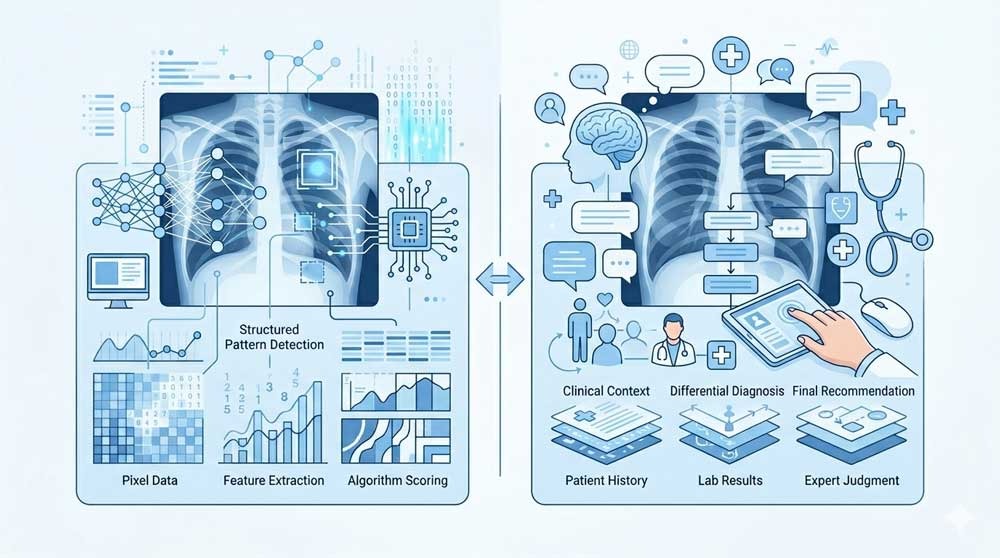

AI does not look at an X-ray the way a person does. A radiologist reads an image by drawing on training, pattern recognition, knowledge of anatomy and disease, prior studies, clinical history, and judgment. AI analyzes the image using a model trained on large sets of labeled images. Those labels usually come from expert annotations, reports, or structured datasets created by radiologists and clinical teams.

What AI “Sees” vs What a Doctor Sees

An AI model breaks the image into mathematical features. It learns pixel relationships, textures, shapes, densities, and spatial patterns linked to specific abnormalities. In chest X-rays, for example, an AI system might score the likelihood of pneumothorax, pneumonia, pleural effusion, or other thoracic findings. A radiologist, by contrast, does more than detect a pattern. The radiologist asks whether the finding fits the patient’s symptoms, whether a prior image shows stability, whether a technical artifact is fooling the model, and whether the image tells only part of the story.

That difference matters. AI often performs best on narrow, well-defined tasks. Human readers perform better when context, nuance, tradeoffs, and unusual presentations matter. RSNA coverage in 2025 described the goal as a partnership between expert radiologists and transparent AI systems, with both together performing better than either alone.

Types of Scans AI Can Analyze

Patients often associate imaging AI with chest X-rays, but Health Canada’s list of authorized AI-enabled medical devices shows far broader use across radiology. AI-enabled tools appear in X-ray, CT, MRI, ultrasound, mammography, workflow software, reconstruction systems, and segmentation platforms. The list is updated periodically and covers devices authorized for use in Canada.

In practice, AI may help detect abnormalities, prioritize urgent cases, improve image quality, and assist with report drafting, depending on the specific system in use.

Even so, the exact role depends on the tool. One product might help detect a pneumothorax on chest radiographs. Another might improve MRI reconstruction. Another might measure cardiac function on ultrasound. “AI imaging” is not one single product or one single capability.

Is AI Better Than a Radiologist at Reading X-Rays?

In clinical practice, AI and radiologists are not competing roles. They work together.

What the Research Says

Some studies show strong performance for AI on specific imaging tasks. RSNA reported in 2024 on a hospital-based study in which an AI tool correctly excluded pathology in a substantial share of unremarkable chest X-rays at high sensitivity thresholds, with lower rates of critical misses than the radiology reports linked to those images. But the same report also noted an important caution: when AI did make mistakes, those errors were on average more clinically severe, because AI lacked the clinical scenario a radiologist uses during interpretation.

A 2025 prospective clinical evaluation published in JAMA Network Open studied a generative AI model integrated into a live radiology workflow for plain radiographs. The model generated draft reports from images plus basic clinical data. Radiologists then reviewed and edited those drafts within their standard reporting software. The study found a 15.5% documentation efficiency gain with no decrease in clinical accuracy on peer review. In other words, AI helped radiologists work faster in that setting without lowering report quality.

Those findings fit the broader direction of the field. AI often works well when the task is focused and the workflow is designed carefully. Its results are less reliable when people assume that performance on one dataset or at one hospital automatically transfers everywhere else. Even strong internal testing does not remove the need for real-world validation, monitoring, and clinician oversight.

Where AI Excels, and Where It Falls Short

AI tends to perform well in repetitive pattern-detection tasks, worklist prioritization, image enhancement, and report drafting for common studies. It helps when radiology departments are dealing with heavy volume and need support with routine cases or urgent flagging. RSNA has also highlighted AI’s value in reducing burden from high-volume chest radiography and in improving workflow around unremarkable exams.

Its limits are just as important. AI does not know how the patient looks in the room. It does not hear the clinician’s concern unless that information is structured and fed into the system. It may be tripped up by unusual anatomy, poor positioning, rare disease, different equipment, or a patient population that differs from the data used in training. This is why safety programs and governance efforts, such as the Canadian Association of Radiologists (CAR) AI initiatives, have become an essential part of adoption.

What This Means for You as a Patient

From the patient side, AI in imaging usually changes the process more than the relationship. You still have an exam. The images still go through the radiology system. A clinician still uses the final report for medical decisions. The difference is that AI may sit in the background helping sort, annotate, score, reconstruct, or draft information before a human signs off.

Does Your Hospital Already Use AI for Imaging?

Possibly. Many health systems and imaging vendors now include AI-enabled tools in their imaging software, scanners, or radiology workflow platforms. Health Canada’s authorized device list shows a large and growing number of tools, many in radiology. But adoption is uneven. One hospital may use AI for stroke triage on CT, another for chest X-ray flagging, another for mammography support, and another for image reconstruction only.

If you want a direct answer, ask the radiology department or imaging center. A simple question works: “Do you use AI-assisted analysis for this type of scan, and is the image still reviewed by a radiologist?” That gets you farther than asking whether the hospital “uses AI” in general.

Will AI Change How You Get Test Results?

Sometimes, and often in ways that prioritize your safety. One of the most significant impacts of AI in Canadian hospitals is its ability to act as an automated “sorting” system. In provinces facing imaging backlogs, AI can analyze images as soon as they are acquired and flag potentially life-threatening conditions, such as a stroke or a collapsed lung. By moving these urgent cases to the top of a radiologist’s queue right away, AI helps ensure that critically ill patients do not wait behind routine exams, so care can begin sooner when time matters most.

The Safety Net: Why Doctors Still Review Every Image

This is the part patients should remember most clearly: in Canada, medical and legal responsibility rests with a human being. AI may act as a second pair of eyes, but it operates within a “physician-led” model. Any pattern the AI flags is treated only as a suggestion until a licensed radiologist validates it. Because every automated finding passes through a doctor’s professional judgment and is weighed against your specific medical history, technology can support accuracy without removing the human accountability that is central to Canadian healthcare.

Frequently Asked Questions

Will AI read my X-ray instead of a doctor?

In standard clinical practice, no. AI assists with detection, prioritization, or report drafting, but a radiologist or qualified physician still reviews the image and finalizes the interpretation.

Is AI imaging available at my local hospital?

It might be, but use varies by hospital, imaging vendor, and scan type. Many Health Canada-authorized AI tools exist in radiology, though adoption is not uniform across all provinces.

Does AI imaging cost more?

Generally, no. In the Canadian public healthcare system, medically necessary imaging is covered by provincial health plans (such as OHIP, MSP, or AHCIP). AI is part of the facility’s internal workflow and does not add a separate charge for the patient.

Is AI better than a radiologist?

On narrow tasks, AI can perform strongly. In full clinical interpretation, radiologists still add context, judgment, and accountability. Current evidence supports collaboration more than replacement.

Will AI help me get results faster?

Sometimes. AI can speed prioritization and reduce documentation time in some workflows, but total turnaround still depends on the clinical setting and staffing levels.

What should I ask before my scan?

Ask whether AI is used for your exam type, whether a radiologist still reviews the images, and how results will be communicated to you. These questions are practical and usually easy for imaging staff to answer.
