Artificial intelligence is becoming more common across healthcare, from imaging support and clinical documentation to triage, prediction models, and workflow automation. As these systems become more embedded in care delivery, hospitals and healthcare leaders are asking a more practical question:

What happens when medical AI makes a mistake?

This is not a theoretical concern. No clinical system performs perfectly, and AI is no exception. A model may miss a serious condition, trigger a false alert, recommend the wrong priority level, or produce an output that appears confident but is clinically misleading. When that happens, the impact is not only technical. It becomes clinical, operational, legal, and reputational.

At Innomed, we see this as one of the most important issues in healthcare AI adoption. Organizations often focus heavily on performance metrics during procurement, but many spend far less time preparing for failure modes. That is a serious gap. In healthcare, mistakes are not edge cases to ignore. They are part of the deployment reality that must be planned for from the beginning.

Short Answer:

When medical AI makes a mistake, the consequences depend on where the tool was used, how much clinical oversight existed, and whether the organization had proper safeguards in place. In most cases, responsibility does not fall on the AI alone. It falls on the healthcare system, workflow, governance, and human decision-making structure around it.

Why This Question Matters More Than “How Accurate Is It?”

A lot of healthcare AI conversations focus on whether a model is accurate enough to be useful.

That matters, but it is not enough.

Every clinical system needs to be evaluated not only by how often it performs well, but also by what happens when it fails. In healthcare, even a low-frequency error can create significant harm if it occurs in the wrong place, reaches the wrong user, or enters a workflow without sufficient review.

This is what makes medical AI different from many ordinary software tools.

If a scheduling system fails, the result is operational disruption. If a medical AI system fails, the result may affect diagnosis, treatment timing, escalation decisions, patient trust, or clinical accountability.

That is why responsible healthcare organizations do not ask only, “How accurate is this model?” They also ask, “What is the failure pattern, and what happens when it is wrong?”

Medical AI Mistakes Usually Fall Into a Few Core Categories

Not all AI mistakes look the same, and not all of them carry the same level of risk.

Some errors are direct and visible. Others are subtle and much harder to catch.

False Negatives Are Often the Most Dangerous

A false negative happens when the AI fails to detect something clinically important.

This is one of the most serious failure types because it creates a false sense of safety. If an imaging tool fails to flag an abnormality, or a deterioration model does not surface a high-risk patient, the care team may proceed without realizing something critical was missed.

The danger is not only the missed output itself. The danger is what the workflow assumes because of that missed output.

If clinicians begin to trust a tool as a reliable screening layer, false negatives become more consequential because they shape what gets reviewed closely and what gets deprioritized.

This is why healthcare AI tools should never be assessed only by average performance. In many use cases, the real question is how dangerous the misses are, not simply how many there are.
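
To make the gap between average performance and dangerous misses concrete, here is a minimal Python sketch. The counts are invented for illustration and the function name `screening_metrics` is hypothetical; nothing here comes from a real tool. With these made-up numbers, overall accuracy works out to 98.2%, yet the tool catches only 60% of the true cases.

```python
def screening_metrics(tp: int, fn: int, fp: int, tn: int) -> dict:
    """Compute basic screening metrics from confusion-matrix counts."""
    total = tp + fn + fp + tn
    return {
        "accuracy": (tp + tn) / total,      # share of all cases classified correctly
        "sensitivity": tp / (tp + fn),      # share of true cases the tool caught
        "specificity": tn / (tn + fp),      # share of healthy cases correctly cleared
        "missed_cases": fn,                 # false negatives in absolute terms
    }


# Hypothetical example: 1,000 scans, 20 with a real abnormality.
# The tool flags 12 of them, misses 8, and raises 10 false alarms.
metrics = screening_metrics(tp=12, fn=8, fp=10, tn=970)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

Because true cases are rare in this example, the large number of correctly cleared scans dominates the accuracy figure and hides the eight misses.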

False Positives Create a Different Kind of Risk

A false positive may seem less dangerous at first, but in healthcare systems, it creates its own serious problems.

When AI flags too many patients, abnormalities, or risk events that are not truly actionable, the result is often workflow overload. Teams become desensitized. Alerts lose credibility. Clinicians spend time chasing low-value outputs instead of focusing on what matters most.

Over time, this creates a deeper operational issue: loss of trust.

Once clinicians begin to see a system as noisy or unreliable, even the correct outputs become easier to ignore. That is one of the most common reasons technically capable AI tools fail in practice. The model itself may not be broken, but the signal-to-noise ratio damages adoption.

This is why AI mistakes should not only be evaluated in terms of patient harm. They should also be evaluated in terms of workflow burden and trust erosion.
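
The workflow burden can be estimated with simple arithmetic. The sketch below is an illustration under assumed numbers: the function `alert_burden` and every input value are hypothetical. It shows that when a condition is rare, even a tool with 90% sensitivity and 90% specificity produces mostly false alerts.

```python
def alert_burden(prevalence: float, sensitivity: float,
                 specificity: float, patients: int) -> dict:
    """Estimate how many alerts a team would see and how many are real."""
    true_cases = prevalence * patients
    non_cases = patients - true_cases
    true_alerts = sensitivity * true_cases          # real cases that get flagged
    false_alerts = (1 - specificity) * non_cases    # healthy patients that get flagged
    total_alerts = true_alerts + false_alerts
    return {
        "true_alerts": true_alerts,
        "false_alerts": false_alerts,
        # Positive predictive value: chance that a given alert is real.
        "ppv": true_alerts / total_alerts,
    }


# Hypothetical example: 1,000 patients, 2% prevalence, 90% sensitivity and specificity.
result = alert_burden(prevalence=0.02, sensitivity=0.9, specificity=0.9, patients=1000)
print(result)
```

Under these assumptions, about 18 alerts are real and about 98 are false, so fewer than one alert in six points to an actual case. That ratio, more than the headline accuracy, is what clinicians experience day to day.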

Some AI Errors Are Not Wrong Outputs; They Are Wrong Influence

This is one of the most overlooked risks in medical AI.

Sometimes the system does not produce an obviously false answer. Instead, it subtly influences human decision-making in the wrong direction.

That might happen when:

- a recommendation appears more certain than it should,

- an output is framed in a way that biases clinician interpretation,

- or a prioritization score changes how urgently a case is reviewed.

These are not always easy to classify as “model errors” in a traditional technical sense. But in practice, they are still harmful if they shape judgment in ways that lead to worse decisions.

This is why healthcare organizations need to think beyond simple accuracy and ask a more operational question:

How does this AI output influence human behaviour inside the workflow?

The Real Risk Is Often Not the Error Itself, but the System Around It

One of the biggest misconceptions in healthcare AI is the idea that if the model performs well enough, the deployment is safe enough.

That is not how real care environments work.

A clinically useful model can still create harm if it is introduced into the wrong workflow, shown to the wrong users, or used without enough safeguards.

This is a crucial point for healthcare leaders.

In many real-world incidents, the problem is not that the AI made a mistake in isolation. The problem is that the surrounding system allowed the mistake to matter too much.

That includes:

- poor oversight,

- unclear ownership,

- weak escalation design,

- insufficient validation,

- or workflow dependence that quietly removes human skepticism.

In other words, the AI error is often only the visible part of a much larger implementation problem.

Who Is Responsible When Medical AI Makes a Mistake?

This is where many organizations become uncomfortable.

There is often a temptation to think of AI as an external layer, almost like a separate decision-maker. But in healthcare, responsibility does not disappear because software was involved.

If a hospital adopts an AI tool and integrates it into care delivery, responsibility still exists across the human and organizational system.

That does not mean one individual always takes the blame. It means accountability remains distributed across the people and structures that selected, approved, implemented, monitored, and acted on the tool.

Responsibility Usually Sits Across Multiple Layers

When a medical AI mistake causes harm or near-harm, the relevant questions usually include:

- Who approved the tool for use?

- Was it validated in the intended environment?

- Were clinicians trained properly?

- Was the output clearly interpretable?

- Was there enough human oversight?

- Did the workflow encourage over-reliance?

- Was the system being monitored for drift or failure?

Those are not “technical side questions.” They are the core of responsible AI deployment.

A healthcare organization that cannot answer them clearly is usually not ready to scale clinical AI safely.

Clinical Oversight Still Matters, But It Is Not a Complete Shield

Many organizations assume that as long as a clinician remains “in the loop,” the risk is under control.

That assumption is incomplete.

A clinician can only provide meaningful oversight if the workflow allows enough time, enough clarity, and enough authority to question the output. If the AI is embedded in a rushed, high-pressure environment and presented with high confidence, the presence of a human reviewer does not automatically eliminate risk.

Human oversight works only when it is real, not symbolic.

That means clinicians need:

- clear visibility into what the tool is doing,

- a reasonable ability to challenge it,

- and a workflow that does not quietly pressure them to defer to it.

Without that, “human-in-the-loop” becomes more of a legal comfort phrase than a true safety mechanism.

The Best Healthcare AI Systems Are Designed for Failure, Not Just Success

This is where mature healthcare AI programs begin to separate themselves from superficial adoption efforts.

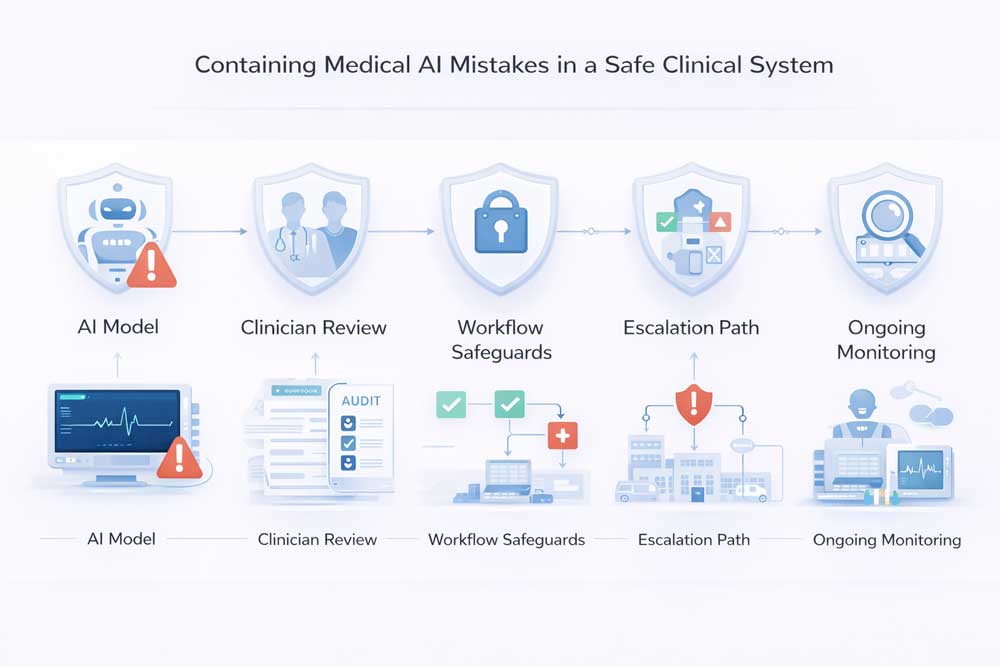

A safe AI deployment is not one that assumes the model will always work. It is one that assumes the model will sometimes fail and builds the workflow accordingly.

That means healthcare systems should be asking:

- What does failure look like here?

- How will we detect it?

- Who reviews it?

- How quickly do we intervene?

- How do we prevent repeat harm?

These questions should exist before go-live, not after an incident.
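
As one way to picture the "How will we detect it?" question, here is a minimal monitoring sketch in Python. It assumes that clinician-confirmed outcomes are available for reviewed cases. The class name `SensitivityMonitor`, the window size, and the thresholds are all hypothetical placeholders, not clinical recommendations.

```python
from collections import deque


class SensitivityMonitor:
    """Track recent sensitivity over a rolling window of reviewed cases."""

    def __init__(self, window: int = 200, min_sensitivity: float = 0.85,
                 min_positives: int = 20):
        # Only the most recent `window` cases are kept.
        self.cases = deque(maxlen=window)
        self.min_sensitivity = min_sensitivity
        self.min_positives = min_positives

    def record(self, ai_flagged: bool, confirmed_positive: bool) -> None:
        """Store one reviewed case: what the AI said and what clinicians confirmed."""
        self.cases.append((ai_flagged, confirmed_positive))

    def status(self) -> str:
        """Return 'insufficient_data', 'ok', or 'escalate'."""
        # Look only at cases that clinicians confirmed as true positives.
        flags_on_positives = [flagged for flagged, positive in self.cases if positive]
        if len(flags_on_positives) < self.min_positives:
            return "insufficient_data"
        sensitivity = sum(flags_on_positives) / len(flags_on_positives)
        return "escalate" if sensitivity < self.min_sensitivity else "ok"


# Hypothetical usage with a deliberately small window.
monitor = SensitivityMonitor(window=10, min_sensitivity=0.8, min_positives=5)
for flagged in [True, True, False, True, False]:
    monitor.record(ai_flagged=flagged, confirmed_positive=True)
print(monitor.status())
```

The code matters less than what it forces an organization to decide in advance: which signal is tracked, what threshold triggers review, and who receives the escalation.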

Strong AI Governance Starts Before the First Error

The safest organizations do not wait for a major failure before building response mechanisms.

They define acceptable use boundaries, clinical review responsibilities, escalation pathways, documentation expectations, and post-incident review processes from the start.

That is what turns AI from a risky experiment into a governable clinical tool.

Without that structure, even a high-performing model becomes difficult to trust over time.

Final Thoughts

When medical AI makes a mistake, the consequences depend on far more than the model itself.

The real outcome is shaped by the workflow around it, the level of clinical oversight, the quality of implementation, and the organization’s ability to detect and respond to failure. That is why healthcare AI safety is not only a model problem. It is a systems design problem.

For healthcare organizations, the goal should never be to find an AI tool that never fails. That standard does not exist in medicine. The real goal is to build a clinical environment where inevitable errors are caught early, interpreted correctly, and prevented from becoming patient harm.

That is where responsible AI adoption becomes operationally credible.

Frequently Asked Questions About Medical AI Mistakes

Can medical AI mistakes harm patients?

Yes. If an AI system misses a diagnosis, creates a misleading recommendation, or influences workflow in the wrong way, it can contribute to delayed treatment or poor clinical decisions.

Who is legally responsible when medical AI makes an error?

Responsibility usually depends on how the system was deployed and used. In most cases, accountability remains with the healthcare organization and the clinical workflow around the tool.

Are AI mistakes in healthcare common?

All AI systems make errors. The key issue is not whether mistakes happen, but whether the healthcare system is prepared to detect, review, and contain them safely.

Does having a clinician review the AI output solve the problem?

Not always. Human oversight only works when clinicians have enough clarity, authority, and time to challenge the output meaningfully.

How should hospitals reduce AI-related risk?

Hospitals should validate AI tools carefully, define clear governance, monitor performance over time, and design workflows that assume failure is possible.